
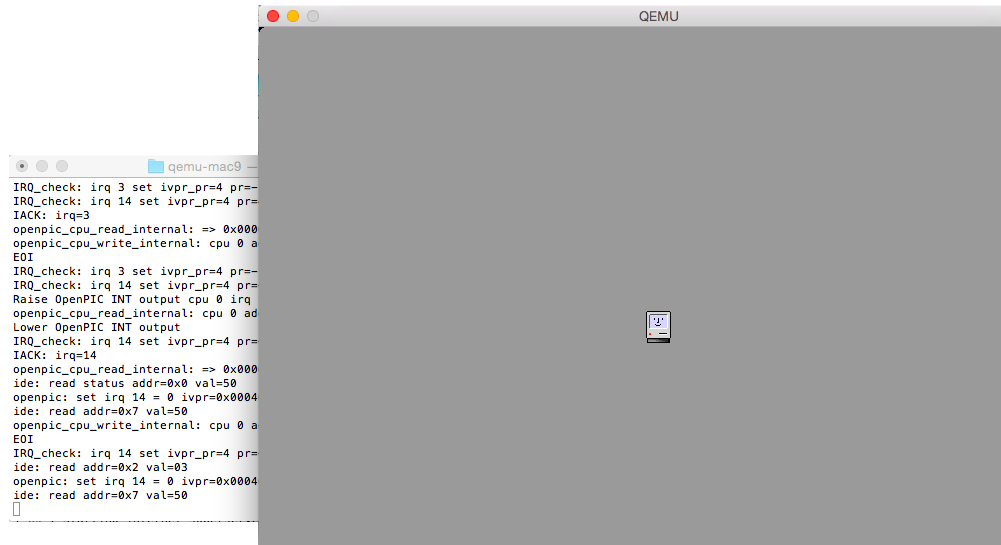

This tar archive holds the distribution of the CUDA 11.4 cuda-gdb debugger front-end for macOS, together with instructions for installing cuda-gdb on macOS. Supported Mac platforms: macOS 10.13. Native macOS debugging is not supported in this release. Remote debugging from a macOS host to other CUDA-enabled targets, however, is supported.

We have developed GPU implementations of some of the PARSEC benchmarks in CUDA. The specific versions of tools working together for me are: the C compiler installed with Visual Studio 2017, cl.exe (Microsoft (R) C/C++ Optimizing Compiler Version 7.1 for x64). Install the NVIDIA graphics driver and CUDA drivers. Download both from the NVIDIA download page. Also install Ocelot, which cross-compiles CUDA programs to the CPU and does emulation. That said, the best way to learn CUDA will be to do it on an actual NVIDIA GPU.

Features and functionality build on the foundation of the CUDA 4.1 release, which introduced: a new LLVM-based CUDA compiler; 1000+ new image processing functions; Redesigned…

In response to popular demand, Linux applications and tools running inside WSL 2 can now benefit from GPU acceleration. If you are a developer working on containerized workloads that will be deployed in the cloud inside of Linux containers, you can develop and test these workloads locally on your Windows PC using the same native Linux tools you are accustomed to. The purpose of this blog is to give you a glimpse of how this support is achieved and how the various pieces fit together.

GPU Virtualization

Over the last few Windows releases, we have been busy developing client GPU virtualization technology. This technology is integrated into WDDM (Windows Display Driver Model), and all WDDMv2.5 or later drivers have native support for GPU virtualization. It is referred to as WDDM GPU Paravirtualization, or GPU-PV for short. Today this technology is limited to Windows guests, i.e. Windows running inside of a VM or container.

To bring support for GPU acceleration to WSL 2, WDDMv2.9 will expand the reach of GPU-PV to Linux guests. This is achieved through a new Linux kernel driver that leverages the GPU-PV protocol to expose a GPU to user mode Linux. The projected abstraction of the GPU closely follows the WDDM GPU abstraction model, allowing APIs and drivers built against that abstraction to be easily ported for use in a Linux environment.

Introducing dxgkrnl (Linux Edition)

Dxgkrnl is a brand-new kernel driver for Linux that exposes the /dev/dxg device to user mode Linux. /dev/dxg exposes a set of IOCTLs that closely mimic the native WDDM D3DKMT kernel service layer on Windows.
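Because dxgkrnl surfaces the GPU as the /dev/dxg device node, a user mode program can tell whether the paravirtualized GPU is present by looking for that node. The following is a minimal sketch, not part of the original post: the function name `has_dxg_device` is hypothetical, and the check assumes the node appears as a character device, which the text does not state explicitly.

```python
import os
import stat


def has_dxg_device(path: str = "/dev/dxg") -> bool:
    """Return True if `path` exists and is a character device.

    Assumption: dxgkrnl exposes /dev/dxg as a character device node.
    """
    try:
        mode = os.stat(path).st_mode
    except OSError:
        # Missing node, or no permission to inspect it.
        return False
    return stat.S_ISCHR(mode)


if __name__ == "__main__":
    if has_dxg_device():
        print("/dev/dxg found: GPU-PV appears to be available")
    else:
        print("/dev/dxg not found: no paravirtualized GPU visible")
```

A check like this only confirms that the device node exists; it says nothing about whether a user mode driver is available to talk to it.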

As we work on upstreaming this new driver, source code is available in Microsoft’s official Linux kernel branch for WSL 2.

Projecting a WDDM compatible abstraction for the GPU inside of Linux allowed us to recompile and bring our premier graphics API to Linux when running in WSL. This is the real and full D3D12 API, no imitations, pretender or reimplementation here… this is the real deal. Libd3d12.so is compiled from the same source code as d3d12.dll on Windows but for a Linux target. It offers the same level of functionality and performance (minus virtualization overhead).

The only exception is Present(). There is currently no presentation integration with WSL, as WSL is a console-only experience today. The D3D12 API can be used for offscreen rendering and compute, but there is no swapchain support to copy pixels directly to the screen (yet).

DxCore (libdxcore.so) is a simplified version of dxgi where legacy aspects of the API have been replaced by modern versions.
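One quick way to see whether libd3d12.so and libdxcore.so are visible to a Linux process is to ask the dynamic loader for them. This is a rough sketch under stated assumptions: the helper names `can_load` and `probe_directx_libraries` are hypothetical, and the code assumes the libraries sit on the loader's default search path, which the text does not specify.

```python
import ctypes
from typing import Dict, Iterable


def can_load(library_name: str) -> bool:
    """Return True if the dynamic loader can open `library_name`."""
    try:
        ctypes.CDLL(library_name)
    except OSError:
        # Raised when the library is missing or cannot be loaded.
        return False
    return True


def probe_directx_libraries(
    names: Iterable[str] = ("libd3d12.so", "libdxcore.so"),
) -> Dict[str, bool]:
    """Map each library name to whether it could be loaded.

    Assumption: the libraries are on the default loader search path.
    """
    return {name: can_load(name) for name in names}


if __name__ == "__main__":
    for name, available in probe_directx_libraries().items():
        print(f"{name}: {'available' if available else 'not found'}")
```

Loading a library this way only proves that it can be opened; actually creating a D3D12 device requires calling into the API itself.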

WDDMv2.9 drivers will carry a version of the DX12 UMD compiled for Linux. The host driver package is mounted inside of WSL at /usr/lib/wsl/drivers and is directly accessible to the d3d12 API. This support is being integrated in upcoming WDDMv2.9 drivers such that GPU support in WSL is seamless to the end user. If you have a WDDMv2.9 driver… the GPU magically shows up in WSL and becomes fully usable.

In addition to D3D12 and DxCore, we ported our machine learning API, DirectML, to work on Linux when running in WSL. DirectML sits on top of our D3D12 API and provides a collection of compute operations and optimizations for machine learning workloads. We brought DirectML’s performant machine learning inferencing capabilities to Linux and expanded its functionality in support of training workflows too!

The DirectML team has a goal of integrating these hardware accelerated inferencing and training capabilities with popular ML tools, libraries, and frameworks. We want to ensure university students and in-industry engineers can leverage the breadth of Windows hardware to learn and gain new ML skills. Utilizing DirectML provides these students and beginners with a simple path to leverage hardware acceleration in their existing systems, by tapping into their DirectX 12 capable GPU from their Linux-based ML tools running inside WSL 2.

As we’ve invested in expanding DirectML’s capabilities, the awesome co-engineering with our silicon partners has been essential for ensuring the breadth of GPUs in the Windows ecosystem benefit from these ML focused investments. This is why we are excited to announce training with DirectML will enter preview starting this summer! In order to make it even easier for our customers to get started training with DirectML, we are releasing a preview package of TensorFlow with an integrated DirectML backend. Besides, we can’t reveal all our plans at one time.
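The driver mount point mentioned earlier, /usr/lib/wsl/drivers, can be inspected from inside WSL to see which host driver packages have been projected into the Linux guest. The sketch below is illustrative only: the function name `list_driver_packages` is hypothetical, and the assumption that each driver package appears as its own subdirectory is not confirmed by the text.

```python
from pathlib import Path
from typing import List


def list_driver_packages(root: str = "/usr/lib/wsl/drivers") -> List[str]:
    """Return the sorted names of subdirectories under `root`.

    Assumption: each mounted host driver package is one subdirectory.
    Returns an empty list when `root` does not exist.
    """
    base = Path(root)
    if not base.is_dir():
        return []
    return sorted(entry.name for entry in base.iterdir() if entry.is_dir())


if __name__ == "__main__":
    packages = list_driver_packages()
    if packages:
        for name in packages:
            print(name)
    else:
        print("No driver packages found under /usr/lib/wsl/drivers")
```

An empty result would suggest either that the code is not running inside WSL or that the host driver does not yet carry the Linux user mode components.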
Once the preview is publicly available, we will continue to make investments, adding new functionality to DirectML and continuing to improve its end-to-end training capability with TensorFlow, making your training workflows even better. Plus, we’re engaging with the TensorFlow community and are going through the RFC process! If you are interested in a sneak peek of DirectML hardware acceleration for training in action, check out the //build Skilling Session titled Windows AI: hardware-accelerated ML on Windows devices.

OpenGL, OpenCL & Vulkan

We have recently announced work on mapping layers that will bring hardware acceleration for OpenCL and OpenGL on top of DX12. We will be using these layers to provide hardware accelerated OpenGL and OpenCL to WSL through the Mesa library. After our work is done, WSL distros will need to update Mesa in order to light up this acceleration. For distros picking up this Mesa update, acceleration will automatically be enabled whenever a WDDMv2.9 driver or above is installed on the Windows host.

What about Vulkan? We are still exploring how best to support Vulkan in WSL and will share more details in the future.

Support for CUDA in WSL will be included with NVIDIA’s WDDMv2.9 driver. It comes as a fully functional version of libcuda.so, which enables acceleration of CUDA-X libraries such as cuDNN, cuBLAS, and TensorRT.
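Since CUDA support arrives as a libcuda.so, one way to confirm it is working is to call the CUDA driver API directly and ask how many devices it reports. The sketch below uses Python's ctypes with the driver API entry points `cuInit` and `cuDeviceGetCount`; the wrapper name `cuda_device_count` is hypothetical, and the code assumes the library is reachable by the loader under the name `libcuda.so`, which may differ between installations.

```python
import ctypes
from typing import Optional

CUDA_SUCCESS = 0  # CUresult value returned on success


def cuda_device_count(library_name: str = "libcuda.so") -> Optional[int]:
    """Return the number of CUDA devices, or None if CUDA is unusable.

    Assumption: `library_name` resolves on the default loader path.
    """
    try:
        cuda = ctypes.CDLL(library_name)
    except OSError:
        return None  # Library missing or not loadable.

    try:
        cu_init = cuda.cuInit
        cu_device_get_count = cuda.cuDeviceGetCount
    except AttributeError:
        return None  # Not a CUDA driver library.

    cu_init.argtypes = [ctypes.c_uint]
    cu_init.restype = ctypes.c_int
    cu_device_get_count.argtypes = [ctypes.POINTER(ctypes.c_int)]
    cu_device_get_count.restype = ctypes.c_int

    # The driver API must be initialized before any other call.
    if cu_init(0) != CUDA_SUCCESS:
        return None

    count = ctypes.c_int(0)
    if cu_device_get_count(ctypes.byref(count)) != CUDA_SUCCESS:
        return None
    return count.value


if __name__ == "__main__":
    n = cuda_device_count()
    print("CUDA unavailable" if n is None else f"CUDA devices: {n}")
```

Going through the driver API keeps the check independent of the CUDA toolkit, so it works even when only the driver-provided library is installed.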


Author: Amber
